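MediaPipe 0.8 : Colab : MediaPipe Hands (translation / commentary)

Translation: ClassCat Co., Ltd. Sales Information

Created: 03/08/2021 (0.8.3)

* This page is a translation of the following MediaPipe documentation, with supplementary explanations added where appropriate:

* The sample code has been verified to run, and has been extended or modified where necessary.

* Feel free to link to this page; we would appreciate a note to sales-info@classcat.com if you do.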



MediaPipe 0.8 : Colab : MediaPipe Hands

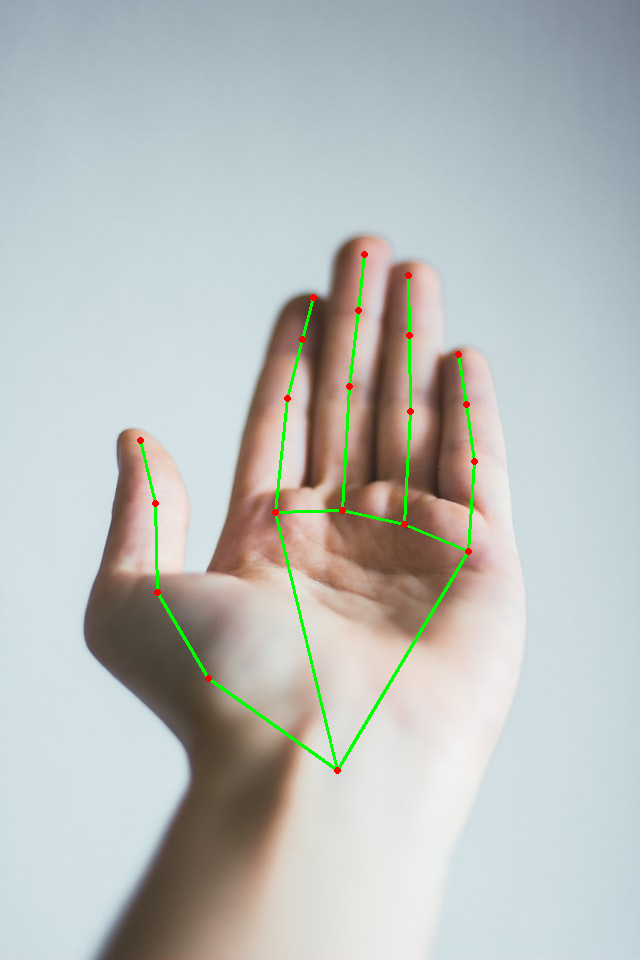

This is a usage example of the MediaPipe Hands solution API in Python (see also http://solutions.mediapipe.dev/hands).

!pip install mediapipe

Upload any image that contains hands to Colab. Here we use two sample images from the web: https://unsplash.com/photos/QyCH5jwrD_A and https://unsplash.com/photos/tSePVHkxUCk.

from google.colab import files

uploaded = files.upload()

import cv2
from google.colab.patches import cv2_imshow

# Read images with OpenCV.
images = {name: cv2.imread(name) for name in uploaded.keys()}

# Preview the images.
for name, image in images.items():
    print(name)
    cv2_imshow(image)
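`cv2.imread` returns `None` instead of raising an error when a file cannot be decoded, so an accidental non-image upload would only fail later, at preview or processing time. The following is a minimal sketch of a guard for that case; the helper name `split_readable` is hypothetical and not part of the original notebook.

```python
def split_readable(images):
    """Split a {name: image} dict into readable images and failed names.

    Hypothetical helper: `images` is assumed to map file names to the
    return value of cv2.imread, which is None when decoding fails.
    """
    readable = {name: img for name, img in images.items() if img is not None}
    failed = [name for name, img in images.items() if img is None]
    return readable, failed


# Stand-in values so the sketch runs without OpenCV; in the notebook,
# pass the `images` dict built with cv2.imread instead.
demo = {"hand.jpg": object(), "notes.txt": None}
readable, failed = split_readable(demo)
print(sorted(readable), failed)
```

In the notebook this could be applied as `images, failed = split_readable(images)` before the preview loop, so that only decodable images reach `cv2_imshow`.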


All MediaPipe Solution Python API examples live under mp.solutions.

For the MediaPipe Hands solution, this module can be accessed as mp_hands = mp.solutions.hands.

Parameters such as static_image_mode, max_num_hands, and min_detection_confidence can be changed at initialization. Run help(mp_hands.Hands) for more information about the parameters.

import mediapipe as mp

mp_hands = mp.solutions.hands

help(mp_hands.Hands)

Help on class Hands in module mediapipe.python.solutions.hands:

class Hands(mediapipe.python.solution_base.SolutionBase)
 |  Hands(static_image_mode=False, max_num_hands=2, min_detection_confidence=0.5, min_tracking_confidence=0.5)
 |
 |  MediaPipe Hands.
 |
 |  MediaPipe Hands processes an RGB image and returns the hand landmarks and
 |  handedness (left v.s. right hand) of each detected hand.
 |
 |  Note that it determines handedness assuming the input image is mirrored,
 |  i.e., taken with a front-facing/selfie camera (
 |  https://en.wikipedia.org/wiki/Front-facing_camera) with images flipped
 |  horizontally. If that is not the case, use, for instance, cv2.flip(image, 1)
 |  to flip the image first for a correct handedness output.
 |
 |  Please refer to https://solutions.mediapipe.dev/hands#python-solution-api for
 |  usage examples.
 |
 |  Method resolution order:
 |      Hands
 |      mediapipe.python.solution_base.SolutionBase
 |      builtins.object
 |
 |  Methods defined here:
 |
 |  __init__(self, static_image_mode=False, max_num_hands=2, min_detection_confidence=0.5, min_tracking_confidence=0.5)
 |      Initializes a MediaPipe Hand object.
 |
 |      Args:
 |        static_image_mode: Whether to treat the input images as a batch of static
 |          and possibly unrelated images, or a video stream. See details in
 |          https://solutions.mediapipe.dev/hands#static_image_mode.
 |        max_num_hands: Maximum number of hands to detect. See details in
 |          https://solutions.mediapipe.dev/hands#max_num_hands.
 |        min_detection_confidence: Minimum confidence value ([0.0, 1.0]) for hand
 |          detection to be considered successful. See details in
 |          https://solutions.mediapipe.dev/hands#min_detection_confidence.
 |        min_tracking_confidence: Minimum confidence value ([0.0, 1.0]) for the
 |          hand landmarks to be considered tracked successfully. See details in
 |          https://solutions.mediapipe.dev/hands#min_tracking_confidence.
 |
 |  process(self, image: numpy.ndarray) -> <class 'NamedTuple'>
 |      Processes an RGB image and returns the hand landmarks and handedness of each detected hand.
 |
 |      Args:
 |        image: An RGB image represented as a numpy ndarray.
 |
 |      Raises:
 |        RuntimeError: If the underlying graph throws any error.
 |        ValueError: If the input image is not three channel RGB.
 |
 |      Returns:
 |        A NamedTuple object with two fields: a "multi_hand_landmarks" field that
 |        contains the hand landmarks on each detected hand and a "multi_handedness"
 |        field that contains the handedness (left v.s. right hand) of the detected
 |        hand.
 |
 |  ----------------------------------------------------------------------
 |  Methods inherited from mediapipe.python.solution_base.SolutionBase:
 |
 |  __enter__(self)
 |      A "with" statement support.
 |
 |  __exit__(self, exc_type, exc_val, exc_tb)
 |      Closes all the input sources and the graph.
 |
 |  close(self) -> None
 |      Closes all the input sources and the graph.
 |
 |  ----------------------------------------------------------------------
 |  Data descriptors inherited from mediapipe.python.solution_base.SolutionBase:
 |
 |  __dict__
 |      dictionary for instance variables (if defined)
 |
 |  __weakref__
 |      list of weak references to the object (if defined)
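The help text notes that handedness is determined on the assumption that the input image is mirrored. The sketch below shows one way the "multi_handedness" field might be read into plain (label, score) pairs. It assumes each entry exposes `.classification[0].label` and `.classification[0].score`. The helper name `read_handedness` and the label-swapping option for non-mirrored input are illustrative assumptions, offered as an alternative to the `cv2.flip(image, 1)` approach the help text recommends.

```python
from types import SimpleNamespace


def read_handedness(multi_handedness, input_is_mirrored=True):
    """Return [(label, score), ...], one entry per detected hand.

    Assumes each entry exposes `.classification[0].label` ("Left" or
    "Right") and `.classification[0].score`, as in results.multi_handedness.
    When the input was NOT mirrored, the labels are swapped; this is an
    assumed alternative to flipping the image before processing.
    """
    if not multi_handedness:  # None or empty when no hand was detected
        return []
    swap = {"Left": "Right", "Right": "Left"}
    hands = []
    for entry in multi_handedness:
        top = entry.classification[0]
        label = top.label if input_is_mirrored else swap.get(top.label, top.label)
        hands.append((label, top.score))
    return hands


# Stand-in object mimicking one detected hand; in the notebook, pass
# results.multi_handedness instead.
fake = [SimpleNamespace(classification=[SimpleNamespace(label="Left", score=0.98)])]
print(read_handedness(fake))
print(read_handedness(fake, input_is_mirrored=False))
```

Returning an empty list when nothing is detected lets callers iterate over the result without a separate `None` check.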

# Prepare DrawingSpec for drawing the hand landmarks later.
mp_drawing = mp.solutions.drawing_utils
drawing_spec = mp_drawing.DrawingSpec(thickness=1, circle_radius=1)

with mp_hands.Hands(
        static_image_mode=True,
        max_num_hands=2,
        min_detection_confidence=0.7) as hands:
    for name, image in images.items():
        # Convert the BGR image to RGB, flip the image around y-axis for correct
        # handedness output and process it with MediaPipe Hands.
        results = hands.process(cv2.flip(cv2.cvtColor(image, cv2.COLOR_BGR2RGB), 1))
        image_height, image_width, _ = image.shape

        # Print handedness (left v.s. right hand).
        print(f'Handedness of {name}:')
        print(results.multi_handedness)

        # Draw hand landmarks of each hand.
        print(f'Hand landmarks of {name}:')
        if not results.multi_hand_landmarks:
            continue
        annotated_image = cv2.flip(image.copy(), 1)
        for hand_landmarks in results.multi_hand_landmarks:
            # Print index finger tip coordinates (in pixels of the flipped image).
            index_tip = hand_landmarks.landmark[mp_hands.HandLandmark.INDEX_FINGER_TIP]
            print(
                'Index finger tip coordinate: ('
                f'{index_tip.x * image_width}, '
                f'{index_tip.y * image_height})'
            )
            mp_drawing.draw_landmarks(
                annotated_image, hand_landmarks, mp_hands.HAND_CONNECTIONS)
        # Flip back so the annotated image is shown in its original orientation.
        cv2_imshow(cv2.flip(annotated_image, 1))
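Because the loop above runs MediaPipe on a horizontally flipped copy, the printed index finger tip coordinates refer to the flipped image, not the uploaded one. The following is a minimal sketch of mapping a normalized landmark back to pixel coordinates in the original orientation. The helper name `to_original_pixels` is hypothetical, and the clamping step is an assumption added in case a landmark falls slightly outside the [0, 1] range.

```python
def to_original_pixels(x_norm, y_norm, image_width, image_height):
    """Map normalized coords from the flipped image to original pixels.

    Hypothetical helper: a horizontal flip mirrors x only, so x becomes
    (1 - x_norm) while y is unchanged. Results are clamped to the image
    bounds (an assumption, for landmarks outside the [0, 1] range).
    """
    x_px = int((1.0 - x_norm) * image_width)
    y_px = int(y_norm * image_height)
    x_px = min(max(x_px, 0), image_width - 1)
    y_px = min(max(y_px, 0), image_height - 1)
    return x_px, y_px


# Example with made-up normalized coordinates on a 640x480 image.
print(to_original_pixels(0.25, 0.5, 640, 480))  # → (480, 240)
```

In the notebook, the inputs would be the `.x` and `.y` values of a landmark together with the `image.shape` dimensions.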

End